Notice that the safety stops are touching the CP in this case. When this CP was installed in its mount, it was decided to keep them touching for safety reasons, given the limited quality of the HCB on one side. We will find out whether this measure was actually needed once the CP is dismounted. In Fig. 2, notice that in the case of NI the CP was very firmly fixed into its mount, and the HCB also acted strongly between the metal and the glass on both sides, so that detaching the mount caused the fused silica ears to break.

On Tuesday 9/6 I was engaged in WI payload work: in the morning with the PAM alignment, and in the afternoon with the WI payload extraction.

At 18:30, after the work, I checked the status of the NI payload, as planned, to have a look at it with a view to completing the assembly work today.

Unfortunately, I saw that two fibers were broken. Luca came and took a look at the system. We do not know when the breakage happened.

The ear appears rather severely damaged.

Today, with Luca, the payload cage was partially dismounted to allow better access for inspection.

Helios and Flavio inspected it this afternoon. Further entries will follow.

Critical points:

- breakages during the assembly or just after it are extremely rare

- availability of spare units for anchors

- clean detachment of 3 anchors without damaging the ears


The WI payload was then re-wrapped and is currently stored in the CB clean room.

I have released a new version v10r3p4 of VirgoProcessMonitoring, that improves the browsing of the configuration file history. All instances have been restarted to use this new version.

The WI PAM activity was completed yesterday, and the laser used for it was switched off yesterday (Tuesday) at 9:41 UTC, as reported in https://logbook.virgo-gw.eu/virgo/?r=69194

ITF found DOWN in UPGRADING mode.

Planned activities communicated to the control room:

- Alignment GUI test Al9 (Boldrini, Fiori, Seder)

- ENV: activity for NI instrumented baffle TF measurement (Tringali, Spinicelli, Fiori) in progress...

- PAY: WI CP mount removal (Majorana, Naticchioni) in progress...

- TCS: WI PAM actuator position reference and movement, plus viewport external inspection (Corubolo, Nardecchia, Lumaca, Pasqualetti, Gherardini, Francescon)

- INF: Confined space safety training (Zaza, Gherardini, Fabozzi) in progress in CEB clean rooms

- DAQ: 15:08 UTC - DMSserver restarted at the request of Loic (for PCal flags)

This morning (09:00 - 10:00 LT), we went to the WI base tower to take reference pictures and measurements of the PAM actuator position (see Fig. 1).

Afterwards, we contacted the vacuum team to understand how much clearance is needed for the future replacement of the viewport. We agreed that moving the PAM actuator to a parking position on the base tower would provide sufficient clearance for the planned work (see Fig. 2).

Finally, we took some pictures of the viewport to be replaced, as seen from the outside (Fig. 3). From the outside, the smaller viewport does not show the same signs of degradation as the viewport to be replaced. In particular, it shows none of the sparkling/glittering features, suggestive of dust or coating flaking, that were observed on the damaged viewport (69192).

The OLTF of the BPL was measured after the bench was suspended. With the loop closed, colored noise with an amplitude of 0.01 V was injected just before the control filter.

The measured UGF is 27 +/- 1 Hz, and the phase margin is 67 +/- 2 deg, for all degrees of freedom.
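The entry does not say how the UGF and phase margin were read off the measured OLTF. Here is a minimal sketch that extracts both numbers. It assumes the OLTF is available as complex samples G(f) on an increasing frequency grid, and it uses the usual negative-feedback convention PM = 180° + arg G(f_UGF). The function name is hypothetical.

```python
import numpy as np


def ugf_and_phase_margin(freq, oltf):
    """Return (UGF in Hz, phase margin in degrees) from a sampled OLTF.

    freq: increasing frequencies in Hz; oltf: complex G(f) at those frequencies.
    The UGF is taken at the first downward 0 dB crossing, interpolating the
    magnitude (in dB) linearly in log-frequency between the two bracketing
    samples. The phase margin uses PM = 180 deg + arg G(f_UGF).
    """
    freq = np.asarray(freq, dtype=float)
    oltf = np.asarray(oltf, dtype=complex)
    mag_db = 20.0 * np.log10(np.abs(oltf))
    # Indices i where |G| goes from above 1 (sample i) to at or below 1 (sample i+1).
    idx = np.flatnonzero((mag_db[:-1] > 0.0) & (mag_db[1:] <= 0.0))
    if idx.size == 0:
        raise ValueError("no unity-gain crossing in the measured band")
    i = idx[0]
    # Fractional position of the 0 dB crossing between samples i and i+1.
    t = mag_db[i] / (mag_db[i] - mag_db[i + 1])
    log_f = np.log10(freq[i]) + t * (np.log10(freq[i + 1]) - np.log10(freq[i]))
    # Unwrap the phase so it is continuous before interpolating it.
    phase = np.unwrap(np.angle(oltf))
    phase_at_ugf = phase[i] + t * (phase[i + 1] - phase[i])
    return 10.0 ** log_f, 180.0 + np.degrees(phase_at_ugf)
```

A pure integrator with unity gain at 27 Hz, `27 / (1j * f)`, gives a 27 Hz UGF and a 90° margin. Adding a pure delay lowers the margin without moving the UGF. With a noisy measured transfer function, some smoothing or averaging before the crossing search may be needed.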

The cellophane will be unwrapped, and after this partial dismount the payload will be wrapped again.


We tested the newest version of the alignment GUI by sending commands to the WE mirror.

We tested the step movement, the ramp movement and the snail function, everything checks out.

We restored the original position of the mirror after the test.

For the record: the new steering mirror was clean when packed at LAPP, and was still clean when unpacked at the NE building. However, after the installation (of the mirror, including the tuning of its angle, and of the sphere), some dust had deposited on the mirror, even though Paul and I were wearing head covers, face masks, glasses, gloves and clean white garments. Paul cleaned the mirror with a tissue.

The fibers are loaded at 50% and intact. The payload is protected by the cellophane wrapping.

This morning we went to NE to install the new steering mirror and the Rx sphere. The NE PCal has finally been on again since 14:00 UTC. See the last figure, with the VIM plot showing the relative calibration of the three power sensors of the NE PCal.

Note that the responsivity (gain) of the Rx sphere has not been changed, nor have the gains of the Tx photodiodes: they are kept the same as during O4. If the 0.9% mis-calibration of the NE PCal during O4 was due to optical losses in the M4 mirror, we expected the power seen by Rx to increase by 0.9% relative to the other two photodiodes.

As a first remark, the relative calibration of the Rx sphere and Tx_PD2 seems similar to that during O4, at better than 0.1%, while the relative calibration of Tx_PD1 has changed by ~0.5%. However, it is difficult to disentangle which sensor calibration has varied. A full recalibration based on WSV, or a comparison with the NCal, will be necessary to get useful information.
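A relative calibration only sees ratios between sensors, which is why the sensor that changed cannot be singled out. The sketch below uses hypothetical, normalized power readings (not measured values). Removing a 0.9% loss in front of Rx would raise both Rx/Tx ratios by 0.9% and leave Tx_PD1/Tx_PD2 untouched. A common drift of all three gains, by contrast, leaves every ratio unchanged. The helper names are illustrative.

```python
from itertools import combinations

# Sensor names as used in this entry.
SENSORS = ("Rx", "Tx_PD1", "Tx_PD2")


def ratio_change(now, ref, a, b):
    """Fractional change of the power ratio P_a/P_b with respect to a reference."""
    return (now[a] / now[b]) / (ref[a] / ref[b]) - 1.0


def all_ratio_changes(now, ref):
    """Fractional change of every pairwise ratio (3 pairs, only 2 independent)."""
    return {f"{a}/{b}": ratio_change(now, ref, a, b) for a, b in combinations(SENSORS, 2)}


# Hypothetical normalized readings, for illustration only.
ref = {"Rx": 1.0, "Tx_PD1": 1.0, "Tx_PD2": 1.0}
# Scenario 1: M4 losses removed, Rx sees 0.9% more power.
m4_fixed = {"Rx": 1.009, "Tx_PD1": 1.0, "Tx_PD2": 1.0}
# Scenario 2: all three sensor gains drift by the same +0.5%.
common_drift = {k: 1.005 * v for k, v in ref.items()}
```

Three sensors give only two independent ratios, so a change seen in Tx_PD1 alone is indistinguishable from an opposite, common change of Rx and Tx_PD2. An absolute reference such as WSV or the NCal is what breaks this degeneracy.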

**********************************

Pictures 1 to 5 show the NE PCal Rx bench with:

- the new mirror (Thorlabs BB1-E03), placed at an AOI of about 20-25°. The reflectivity of this mirror as a function of AOI was characterized at LAPP, as shown in the second-to-last figure.

- the Rx sphere, moved to a different position than before (since the AOI was 18-19° during O4)

- the temperature and humidity sensor moved behind the sphere.

- the sphere cap and a beam dump, to be used during interventions on the bench, also placed behind/beside the sphere.

Before enabling the power supply (Hameg29) to switch on the sphere, I switched it off to recover the Ethernet monitoring. Unfortunately, after switching it back on, channel 1 (-12 V for the NE_Tx_PD photodiodes) was dead and no longer provided any voltage. Note that this power supply for the NE PCal has already been replaced twice because of a similar issue, but on channel 3 instead.

In the afternoon, we replaced the Hameg29 power supply with the Hameg32. Following advice from Nicolas Letendre, we added a cable connecting the grounds of the two pairs of channels: 1 and 2 (providing the -12 V and +12 V for the photodiodes) and 3 and 4 (providing the -15 V and +15 V for the sphere).

Another change: the maximum current setting is now the same as at WE, 0.3 A for channels 1 and 2 and 0.5 A for channels 3 and 4 (until today it was 0.3 A for all four channels).

Figures 7 to 10 show the NE PCal electronics in the rack, with a partial view of the cabling, and the power supply panel information with the laser off and with the laser set to 1.3 W. Figure 11 shows the ID of the follower circuit used for the NE Rx sphere (before the ADC).

Figure 6 shows the rear panel of the laser driver: I noticed that the leftmost fan is not working (and another fan seems to be a bit noisy).

**********************************

Figures 12, 13, 14 and 15 show the WE PCal rack, the ID of the WE Rx follower circuit, the rear panel of the laser driver (with all fans working), and the power supply panel with the laser set to 1.3 W.

**********************************

Figures 10 and 15 show the information about the setup of the NE and WE PCal Hameg power supplies, as well as the current values delivered when the laser is set to 1.3 W.

This afternoon, the status of the HWS-DET SLED and of the flip mirror installed to block the beam on EDB was checked.

It was found that the flip mirror could not be moved either via the VPM or using the local remote control.

The local remote control is normally powered by its dedicated power supply. However, since the corresponding power connection was difficult to identify, the discharged battery was replaced with a new one.

After the battery replacement, the flip mirror became operational again and can now be controlled both remotely and locally.

The nominal power supply of the local remote control still needs to be identified and verified. For the time being, the system is operating correctly using the new battery.

The morning was dedicated to the weekly maintenance, which started at 6:00 UTC. Here is the list of activities reported to the control room:

- standard vacuum refill from 6:00UTC to 10:00UTC (VAC Team);

- cleaning of central building (Ciardelli with external firm: from 6:00UTC to 10:00UTC);

- TCS: PAM actuator alignment on WI (#69194);

- CAL: north end PCAL switch on and checks;

- PAY: WI payload removal, in progress;

- ENV: Preparation activities for NI instrumented baffle TF measurement;

Air Conditioning

At around 13:30 UTC I set the detection area air conditioning system back to "portata ridotta" (reduced flow).

SBE

Recovery of the SDB2 vertical position with the F1 step motors from 13:35 UTC to 13:45 UTC.

We are going to enter the NI tower (Irene, Piernicola, Maria).

Around 08:00 UTC, we started the intervention inside the WI tower (69190). Ettore was inside the tower, Cecilia and Ilaria were under the tower, while Luciano and Diana were at the WI base tower / TCS room.

At the beginning of the activity, the VPM process TCS_PAM_WI_PowerSupply was not working properly, and several signals related to PAM on DD remained grey, including the TX/TY motor positions and the actuator voltage/current signals. Giulio came to help recover the situation.

The WI viewport was reported to be in poor condition (69192).

As reference, we used the information from the previous PAM picomotors calibration performed on April 14th, 2026 (68992).

Following the alignment safety procedure prepared by Ilaria and Marco (69189), we switched on the red alignment laser and Ettore checked its position with respect to the HR surface of the WI TM. After some alignment steps with the picomotors (Fig. 1), the actuator was left at the following final motor positions:

- TX = -21555 steps, corresponding to approximately 3.9 cm downward

- TY = -4666 steps, corresponding to approximately 0.7 cm to the left
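The step counts and distances above imply rough per-axis scale factors, about 1.8 µm/step for TX and 1.5 µm/step for TY. These may be handy when the actuator has to be put back in place after the viewport replacement. In this minimal sketch, the factors are back-computed from this entry's own approximate numbers, not taken from the April calibration (68992). The sign convention (negative TX = down, negative TY = left) is assumed from the same two data points, and the helper names are hypothetical.

```python
# Rough scale factors in cm per step, back-computed from this entry only
# (ASSUMPTION: linear, sign-preserving response on each axis).
CM_PER_STEP = {
    "TX": 3.9 / 21555,  # negative TX steps move the beam downward
    "TY": 0.7 / 4666,   # negative TY steps move the beam to the left
}


def steps_to_cm(axis: str, steps: int) -> float:
    """Signed displacement in cm for a picomotor step count on one axis."""
    return steps * CM_PER_STEP[axis]


def cm_to_steps(axis: str, cm: float) -> int:
    """Nearest whole number of steps giving a signed displacement in cm."""
    return round(cm / CM_PER_STEP[axis])
```

Because both reported distances are approximate, these factors are only good for estimates. The recorded position relative to the alignment plate remains the reference for reinstallation.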

After the alignment, the red laser was switched OFF and the heater was powered, initially at 6 V and then increased up to about 7–7.5 V, corresponding to roughly 4–4.4 A. Ettore eventually managed to see the mask near the viewport (Fig. 2).
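For reference, the electrical power delivered to the heater and its apparent resistance follow from these readings. This assumes the lower voltage goes with the lower current:

$$
P = V I \approx 7\ \mathrm{V} \times 4\ \mathrm{A} = 28\ \mathrm{W}
\quad\text{to}\quad
7.5\ \mathrm{V} \times 4.4\ \mathrm{A} = 33\ \mathrm{W},
\qquad
R = \frac{V}{I} \approx 1.7\ \Omega .
$$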

The actuator was finally switched off at 09:41 UTC, setting the voltage back to 0 V, and the activity ended.

The DaqBox server has been updated so that it can use the "zero" or "flat" (hold) extension policy for the DAC1955 channels. The DaqBox v17r11 release implements this new facility.

This new release has been deployed on the SDB2 LC and SBE control loops, and the related Acl servers have been updated accordingly (SDB2_LC and SDB2_SBE).

- operations performed between 2026-06-08-08h29m21-UTC and 2026-06-08-09h02m42-UTC
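The entry does not define the extension policies. This minimal sketch assumes that "extension" refers to how the output samples between two 10 kHz updates are filled when the DAC runs at a higher rate. "Zero" would then insert zeros, "flat" would hold the last value (zero-order hold), and "linear" would interpolate. The function names and the 100 kHz output rate in the example are illustrative assumptions, not the DaqBox or FPGA implementation. The sketch shows the generic effect on the spectral image of a 1 kHz tone at 10 kHz - 1 kHz.

```python
import numpy as np


def extend(x, factor, policy):
    """Upsample x by an integer factor using a given extension policy.

    "zero":   keep each input sample and fill the factor-1 slots after it with 0.
    "flat":   hold each input sample for `factor` output samples (zero-order hold).
    "linear": interpolate linearly between consecutive input samples; the slots
              after the last sample hold its value (np.interp clamps at the edge).
    """
    x = np.asarray(x, dtype=float)
    if policy == "zero":
        y = np.zeros(len(x) * factor)
        y[::factor] = x
        return y
    if policy == "flat":
        return np.repeat(x, factor)
    if policy == "linear":
        t_out = np.arange(len(x) * factor) / factor
        return np.interp(t_out, np.arange(len(x)), x)
    raise ValueError(f"unknown policy: {policy!r}")


def image_ratio(policy, f_tone=1_000.0, fs_in=10_000.0, factor=10, n_in=1_000):
    """Amplitude of the first image (fs_in - f_tone) relative to the tone itself."""
    t = np.arange(n_in) / fs_in
    y = extend(np.sin(2 * np.pi * f_tone * t), factor, policy)
    spectrum = np.abs(np.fft.rfft(y))
    df = fs_in * factor / len(y)  # frequency resolution of the output FFT
    k_tone = int(round(f_tone / df))
    k_image = int(round((fs_in - f_tone) / df))
    return spectrum[k_image] / spectrum[k_tone]
```

Under these assumptions, zero insertion leaves the image at the same amplitude as the tone. The hold attenuates it by the sinc response of the hold (to roughly 11% here), and linear interpolation attenuates it further. This is the generic behavior one would look for in ASD plots around 10 kHz.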

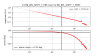

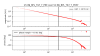

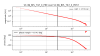

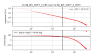

The attached plots compare the SDB2 LVDT_in and LVDT_out channels for the following conditions:

- purple: 2026-05-12-02h59m42s-UTC: DAC1955 firmware v2, DaqBox server v17r3p2, Acl linear extension policy, LVDT channels sent to the DAC1955 at 100 kHz

- red: 2026-05-13-01h13m04s-UTC: DAC1955 firmware v3, DaqBox server v17r10, FPGA zero extension policy, LVDT channels sent to the DAC1955 at 10 kHz

- blue: 2026-06-09-00h26m22s-UTC: DAC1955 firmware v3, DaqBox server v17r11, FPGA flat extension policy, LVDT channels sent to the DAC1955 at 10 kHz

- LVDT channels ASD from 0 Hz to 25 kHz

- LVDT channels ASD zoom around 10 kHz

- LVDT channels ASD from 20 Hz to 50 Hz

The next two plots show the trend of the relevant channels over two days, from 2026-06-07 to 2026-06-09, for the SDB2 LC and SBE controls.

Since 2026-06-09 at 14h UTC, SBE_SDB2_ACT_F0H has been drifting and is now saturating; as a consequence, the SDB SBE loops are open.

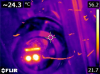

This morning, before proceeding with the alignment of the WI Point Absorber Actuator (PAM) on the HR surface of the mirror, Ettore inspected the large ZnSe viewport (158 mm diameter).

Unfortunately, the viewport shows the same coating degradation already observed on the NI side (69077).

The inspection of the small viewport will be carried out after the payload removal.

Replacing the viewport will require the removal of the WI PAM actuator.

Nevertheless, we proceeded with the actuator alignment as planned (see dedicated entry). Before the viewport replacement, the actuator position will be recorded with respect to the alignment plate, so that it can be reinstalled in the same position afterwards.

Yesterday afternoon (June 8th), around 16:00 UTC, I entered the detection lab to perform some checks in preparation for a future intervention on the SDB2 minitower (before I entered the lab, Davide Soldani set the air flow in the detection clean room to maximum speed). During this intervention we would like to use a temporary camera on SDB2 to measure the beam size. For this reason I checked the cabling of the EDB_B1t camera.

In particular, I unplugged the green cable of the camera and removed it from the EDB cable tray to check whether such a cable could reach the SDB2 bench, and the result is positive. After this check I put the green cable back on the EDB cable tray and plugged it back into the EDB_B1t camera. A while later I also checked that the camera was still working.

I measured the cable length needed from camera board #13, installed on DAQ box #51 (under the EDB bench), up to the SDB2 minitower, and estimated that a 6 m cable is needed. Note that the power supply cable of the EDB_B1t camera is 10 m long (according to the sticker attached to the cable). The EDB_B1t camera model is Smartek GC1281XM-S90 (with large power supply connector).

During the inspection of the EDB bench, I noticed that there is a cable plugged into service mezzanine #10 (DAQ box 51) that is not plugged in on the other side. This cable reaches the end of the EDB bench (towards SDB2) and could be useful if one wanted to add a photodiode on the EDB bench to monitor the 1064 nm light reflected by the dichroic mirror located on the Hartmann wavefront sensing beam path.

I took advantage of my presence in the detection lab to check the 2-inch optics stored in the rack. Here are the optics I could find: W208, W213, W216, W205, W203, W231, W232, V205, V206, V207. There is also an optic in a package labelled W202, but I could not see the SN written on the optic itself. Additionally, there is an uncoated beam splitter (R=8%), what seems to be a commercial beam splitter with R=90% (not the one we usually install on the detection benches), and a Laseroptik box containing an optic labelled R=30%.
