After discussing it with Walid in the morning, we decided to intervene on the SL fiber alignment to see whether we could recover power at the slave output and improve the alignment toward the Neovan.

We started by switching off the SL and taking additional references of the beam going from the ML toward the Neovan (in reflection off the SL), since we had previously observed that the Neovan output power was quite good when it was seeded by the ML alone.

We then worked on the alignment inside the SL cavity, acting on the vertical and horizontal axes of both fibers and recovering the alignment with the lenses, in order to maximize the output power. We managed to increase it from 0.32 V to about 0.34 V. We could not go higher and get back to 0.38 V because both axes of the lens on the western side had reached the end of their coarse adjustment range.

With only about 10% of the power still missing, we then checked the alignment toward the Neovan, which turned out to be better than yesterday. We had to slightly adjust the tilt by acting on M0.4 (the second mirror at the SL output).
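As a quick check of the "about 10%" figure, assuming the monitored voltage scales linearly with optical power and taking the 0.38 V level mentioned above as the reference, the remaining shortfall is:

$$
\frac{0.38\,\mathrm{V} - 0.34\,\mathrm{V}}{0.38\,\mathrm{V}} \approx 0.105 \approx 10\%
$$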

We injected the SL into the Neovan and slowly increased its power using the IPC between the two.

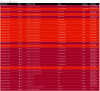

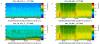

We eventually recovered 0.35 V on AMP_DC, which is higher than what we had before. From the AMP diagbox QPD signals, the beam appears to be mainly shifted horizontally with respect to its previous position (figure below). In addition, the temperature of the Neovan head is slightly higher, around 31°C instead of 30°C.

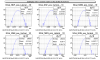

We have not tried to realign the PMC yet, but the peaks on PMC_TRA indicate that it is misaligned, as expected given the beam shift seen on the AMP QPD.

For the weekend, we decided to flip the mirror between the SL and the Neovan and to reduce the current of the Neovan pumping diodes to prevent the head from overheating. We will monitor the thermal behavior of the SL over the weekend.

The next steps are to reinject the SL into the Neovan, check whether a slight realignment of the beam inside the Neovan could further increase the output power, and realign the PMC so that we can eventually try to lock it.