The spare part has been installed and the pumpdown was then started. The cryotrap is now under high vacuum, and the cooldown is foreseen from next Tuesday.

A few more observations concerning the test of April 23:

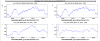

- at some point, while the AHU was off, we stopped the water flux by closing the valves located inside the CEB AHU room. Unexpectedly, we measured an increase in acoustic noise in the range 50 to 100 Hz (Figure 1).

- between 8:49 and 11:13 UTC, while the AHU was off, we closed all the air valves of the ducts (all set to 0%). In principle we would expect some change in the position of the low-frequency spectral peaks if they were associated with acoustic modes of the air ducts. We did not notice any change (Figure 2).

- acoustic spectrograms show some peaks changing frequency, mostly above a few hundred Hz. They seem correlated with the frequency of the SUPPLY fan (Figures 3 and 4). Yet we then noticed that similar ones are also present when the HVAC was running in MANUAL mode, that is, with both fan frequencies fixed (Figure 5). Could they be associated with the air flow regulators instead?

- IMC alignment and measurement of the new AA sensing matrix

- IMC longitudinal wp. The offset on the IMC PDH error signal found by the scan appears to be quite large (-1.6 V, see attached plot). However, with this offset the IMC lock is very unstable, so we kept the old one (-0.07 V). It remains to be understood why the scan gives such a large offset (maybe on Monday we will check the alignment in the EOM? on the IMC refl photodiode?).

- IMC Fmoderr. We did it with the automation, and the result was very close to the optimal wp.

- IMC angular wp --> we found an issue on the NF V RF quadrant. We did the usual scans of the galvo working points (scanning the galvo offsets) and looked at the coupling between error signal and frequency noise (TF between the perturbation at 1111 Hz and the quadrant signal, i.e. the demodulated I quadrature). We observed a strange behaviour for the NF V (non-linearity: the slope changes around 0). See plots 2 and 3, which compare the current scan (30 Apr 13:17:02 UTC) with an old one (10 Apr 2026 10:03:10 UTC): the magnitude doesn't go to zero and the phase behaviour looks strange. We then went to the LL Atrium, powered the NF QPD electronic box off and on again, and made another scan at utc2gps(2026,04,30,15,05,08) from -0.5 to 0.5 in 300 s (plots 6 and 7), which was still bad. To be investigated next week.

- IMC OLTF: we found 96 kHz UGF and 30 deg phase margin. We added 1 dB to get 107 kHz and 23 deg phase margin (plot 8).
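For reference, the GPS time of the second NF V scan can be cross-checked with a minimal sketch like the one below. The function `utc_to_gps` is a hypothetical stand-in written here for illustration, not the `utc2gps` helper quoted in the entry, and it assumes a fixed GPS-UTC offset of 18 s (valid since 2017-01-01, assuming no leap second has been introduced since).

```python
from datetime import datetime, timezone

# GPS time starts at 1980-01-06 00:00:00 UTC.
GPS_EPOCH = datetime(1980, 1, 6, tzinfo=timezone.utc)

# Assumption: GPS - UTC = 18 s, valid from 2017-01-01 as long as
# no further leap second has been introduced.
GPS_MINUS_UTC_S = 18


def utc_to_gps(year, month, day, hour, minute, second):
    """Convert a UTC date and time to integer GPS seconds (hypothetical helper)."""
    utc = datetime(year, month, day, hour, minute, second, tzinfo=timezone.utc)
    return int((utc - GPS_EPOCH).total_seconds()) + GPS_MINUS_UTC_S


# Start and end of the 300 s scan quoted in the entry above.
scan_start = utc_to_gps(2026, 4, 30, 15, 5, 8)
scan_end = scan_start + 300
print(scan_start, scan_end)
```

The fixed offset makes the sketch unsuitable for dates before 2017; a leap-second table would be needed for those.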

In the meantime we prepared the Acl code ISYS_BPL for the bench pointing loop. We still have to plug the PZTs into the DAC (we first need to find a way to block the beam before the EOMs while testing the PZTs, since otherwise we would risk burning something).

Attached are some pictures of the EIB after the installation.

Since yesterday 12:00 LT (April 29) the DET AHU has been running in MANUAL mode (fixed fan frequencies) at a slightly reduced rate of the SUPPLY fan (28.5 Hz instead of the usual 31.5 Hz). Ambient parameters (TE, HU, PRES) look stable enough (see attached), so we decided to keep it in this configuration until Monday.

ITF in DOWN, UPGRADING

This morning the INJ team worked on beam realignment and RFC locking. The MC valve was opened by the vacuum group.

After the operations in the laser lab, the HVAC pressure system working point was set back to normal and BACnetServer was restarted.

At 12:00 UTC the vacuum group started venting the NI tower.

- today upgraded versions of the quadrant photodiodes for the bench pointing loop have been installed. Yesterday Piernicola and Flavio found out that the excess noise on the QPD signals was probably due to the length of the cables.

The two QPDs have then been realigned to have DC and asymmetries close to 0 (plot 1) and plot 2 shows the noise of the QPDs without any laser beam.

- In order to close the BPC we had to move the picomotors on the M6 and M8 mirrors on EIB. We found that the driver for the M8 tx and ty picomotors doesn't work anymore. We temporarily plugged M8 tx and ty into Far M1 ty and tx respectively. We could then close the loop.

- finally, we struggled to lock the IMC since we had not realized that the valve between SIB1 and MC had been closed (we thought the beam was misaligned instead).

Tomorrow we will continue with the INJ recovery and the pre-commissioning of the quadrants on EIB.

*** Erratum ***

Due to an offset between the Kieback & Peter interface and the inverter, the supply and return fan speeds reported were incorrect.

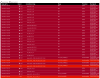

The values in the table below have been updated, and an additional frequency step has been added.

| Time | Mode | Supply fan frequency | Return fan frequency | Note |
|---|---|---|---|---|
| April 27, 12:30 UTC | Automatic | ~32 Hz | ~13.8 Hz | Supply: air speed ~2.6 m/s → air flux ~3276 m³/h; Return: air speed ~0.2 m/s → air flux ~252 m³/h |
| April 27, 16:10 UTC | Manual | ~22 Hz | ~15.7 Hz | Supply: air speed ~2.5 m/s → air flux ~3150 m³/h; Return: air speed ~0.3 m/s → air flux ~378 m³/h |
| April 28, 09:40 UTC | Automatic | ~32.8 Hz | ~13.8 Hz | |
| April 28, 15:00 UTC | Manual | ~22 Hz | ~15.7 Hz | |
| April 29, 10:36 UTC | Manual | ~28.5 Hz | ~13.8 Hz | Supply: air speed ~3 m/s → air flux ~3780 m³/h; Return: air speed ~0.3 m/s → air flux ~378 m³/h |
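The air-flux values in the Note column are all consistent with a volumetric flow Q = v · A. Every quoted pair gives the same ratio of 1260 m³/h per m/s, which corresponds to an effective duct cross-section of 0.35 m². The sketch below reproduces the conversion. The area is inferred from the quoted numbers and is an assumption, not a documented duct dimension.

```python
# Effective duct cross-section in m^2.
# Assumption: inferred from the table (3276 m3/h at 2.6 m/s), not measured.
DUCT_AREA_M2 = 0.35


def air_flux_m3_per_h(speed_m_per_s, area_m2=DUCT_AREA_M2):
    """Volumetric air flux in m^3/h for a given air speed in m/s."""
    return speed_m_per_s * area_m2 * 3600.0


# Air speeds quoted in the table, for both supply and return ducts.
for speed in (0.2, 0.3, 2.5, 2.6, 3.0):
    print(f"{speed:.1f} m/s -> {air_flux_m3_per_h(speed):.0f} m3/h")
```

Using the same area for the supply and return ducts is only justified by the fact that the quoted figures follow the same ratio.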

ITF found DOWN in UPGRADING mode.

The planned activity on Free space ALS / LB->EIB pointing went on for the whole shift.

Software

13:10 UTC: SUSP_Fb restarted after a crash

In order to debug the new QPDs installed for the BPL loop, which show anomalous noise in both the DC and asymmetry signals, we removed one of the new QPDs (SN: DCQPD26-1) from the EIB bench and the QPD used for IBJM (SN: DCQPD 4) from the EAB bench.

For the time being, the EIB bench is blocked and the PMC is in scan, with the beam blocked on LB.

The table below reports the latest actions performed on the supply and return fan operation.

| Time | Mode | Supply fan frequency | Return fan frequency | Note |
|---|---|---|---|---|
| April 27, 12:30 UTC | Automatic | ~32 Hz | ~13.8 Hz | Supply: air speed ~2.6 m/s → air flux ~3276 m³/h; Return: air speed ~0.2 m/s → air flux ~252 m³/h |
| April 27, 16:10 UTC | Manual | ~28.5 Hz | ~13.8 Hz | Supply: air speed ~2.5 m/s → air flux ~3150 m³/h; Return: air speed ~0.3 m/s → air flux ~378 m³/h |
| April 28, 09:40 UTC | Automatic | ~32.8 Hz | ~13.8 Hz | |
| April 28, 15:00 UTC | Manual | ~28.5 Hz | ~13.8 Hz | |

In Figure 1, a slight temperature drift of approximately 0.1–0.2 °C is observed over time, together with oscillatory behavior in the humidity control loop.

The morning was dedicated to the weekly maintenance, which started at 6:00UTC. Here is a list of the activities reported to the control room:

- standard vacuum refill from 6:00UTC to 10:00UTC (VAC Team);

- cleaning of central and west end buildings (Menzione with external firm: from 6:00UTC to 10:00UTC);

- ALS: free space beating installation (#69042);

- SBE: EIB balancing (#69045);

- SQZ: removal of the fiber noise box (#69047);

- TCS: chiller check and refill (Menzione 7:15UTC);

- thermal camera reference 8:00UTC.

The work on the injection system is still in progress...

Today I removed the “Fiber Noise box 2” in the Atrium, used for the phase noise suppression loop. The goal is to bring it to Padova to upgrade it. In particular, the idea is to actively stabilize the plate that houses the fibers and, if needed, shorten the fibers by fiber splicing.

Activity on detLab:

First I connected the output of the fiber going to the squeezer directly to the SQZ main PLL. This way, the retroreflector is excluded; otherwise, it would have reflected about 50% of the power back toward the injection (see Fig1).

In this configuration the power reaching the PLL from the injection is doubled. This is evident from the DC value of the PLL photodiodes (see Fig2).

Activity on EE-Room

I turned off the RF amplifier that powered the AOM in the fiber noise box 2. The amplifier was then removed and will be taken to Padova along with the box.

Activity in the Atrium

After disconnecting the cables and the optical fibers from the Fiber noise Box 2, I removed the box. The optical fiber that fed the box is now directly connected to the fiber that carries the signal to detection. A 10 dB Thorlabs fiber attenuator was inserted in this connection to keep the power reaching the squeezer approximately the same (figure 1). After this operation, the DC value of the photodiodes slightly increased to above 0.30 V (figure 3).

The disconnected cables in the atrium are labelled as

“Fiber Box” RF amplifiers power supply

“Fiber Box LO Sin” RF output of the first amplifier in the box

“FiberBox LO Des” RF output of the second amplifier in the box

“Fiber Box LO AOM” RF power to the AOM in the box

Before the intervention of Henk Jan, we tried to rebalance the bench by moving some of the weights on the bench and adding some more (around 3 kg). We also had to take off some weights from under the bench (5 kg) in the upper right side of EIB (corner near SIB2 in the SIB1 direction). In the end it was not possible to rebalance with the weights alone, and we asked for the expert intervention.

This morning around 8:50 UTC a resistance box provided by the electronics team was connected to the piezo driver controller of the BPL mirrors (INJ_BPL_QD1/2_H/V) in the EEroom. Attached are the FFTs of the signals before and after the installation.


One week ago (Monday April 20th) at 6:50 UTC (8:50 LT) a magnetic comb with 1 Hz spacing turned on, and it has been on since then. The origin is still unknown. The noise mostly affects frequencies up to a few tens of Hz and has the characteristics of clock-driven noise, yet it is rather intense and widespread. Here is what we have observed so far:

- the noise was first noticed in the external magnetometer (thanks Renato)

- the source is most likely DC-powered since the noise is not consistent with 50 Hz sidebands

- comb lines are very narrow, with at least ms stability, which points to some clock-driven device.

- the noise is louder (a factor 10 to 100 louder than EXT) in CEB magnetometers and in magnetometers close to towers. NI, WI, BS are the loudest.

- the noise is present also in the NE and WE magnetometers (curious!) where it started at the same time as for CEB

- no evidence of noise is seen in any VOLT, CURR sensor (UPS or IPS), as well as nothing in the grounding monitor

- the ELECTRIC monitor seems to show a factor two increase in the amplitude of lines at 1,2,3,4 Hz.

- Large coils are all off: the VPM processes report that all coil relays are set to 0, and in addition the CURR and VOLT coil monitors do not see any noise

- the logbook does not report any concurrent operation

- VAC team and Davide are not aware of any new hardware or operation at that time

- the VPM log does not report any action at that time

This morning we finished the work on EIB for the ALS part. The next step will be to commission the beating once we have the green from the arms.

- We calibrated the monitor photodiodes and connected the RF photodiodes to the ADC. The IR pickoff is around 1.16 W and 26 mW of green beam are produced (1st attached plot).

- The IR pickoff arrives at the end buildings and the green is produced (plot 3).

- The total added weight for the ALS part is around 19 kg. The attached pdf lists the installed optics. Note that 21.1 kg had been removed at the beginning of the installation (IBMS setup, not used anymore).

- We decided to create two different paths to independently align the two reference beams into the N and W RF photodiodes (see photo). We did not touch the optics for the beams coming from the arms in order to keep the reference. The beating will be aligned once we have the green from the ends.

The size of the beam at the level of the collimators was 1500 um instead of the 1800 um required for the coupler Thorlabs PAF2A 11 A, so we adjusted the lens after the crystal to get the right beam size.

We got:

- 1 mW / 1.3 mW for the north --> 77% coupling efficiency

- 180 uW / 220 uW for the west --> 80% coupling efficiency

Then we tuned the waveplate to have the same power on the two paths --> 740 uW on each

- From the main ALS pickoff at the beginning of the EIB we get max 1.15 W of IR power, which is converted in the crystal to 27.5 mW of green --> 2% conversion efficiency

- The IR pickoff to the DAQ room has been optimized again (since we moved the lens after the crystal): 270 mW / 400 mW in the IR pickoff --> 67% coupling efficiency. The fiber has been connected and we send 35 mW of IR to the DAQ room box (to be split and sent to WEB and NEB). This is the same value as before (chosen since more power would induce Brillouin scattering).
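As a cross-check of the efficiencies quoted above, the ratios can be recomputed from the measured powers with a short sketch like the following. The labels and the helper name `efficiency_percent` are illustrative only. The input powers are the ones reported in this entry.

```python
def efficiency_percent(p_out_w, p_in_w):
    """Ratio of output to input power, in percent."""
    return 100.0 * p_out_w / p_in_w


# (output power, input power) in watts, as reported in the entry.
measurements = {
    "North fiber coupler": (1.0e-3, 1.3e-3),
    "West fiber coupler": (180e-6, 220e-6),
    "IR to green in the crystal": (27.5e-3, 1.15),
    "IR pickoff fiber to DAQ room": (270e-3, 400e-3),
}

for name, (p_out, p_in) in measurements.items():
    print(f"{name}: {efficiency_percent(p_out, p_in):.1f} %")
```

The recomputed values agree with the rounded figures in the list, with the west coupler and the crystal coming out slightly above the quoted 80% and 2%.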

ITF found DOWN in UPGRADING mode.

- The planned activity on Free space ALS / LB->EIB pointing went on for the whole shift.

- NI cryotrap repaired with a replacement of a link part. Activity still in progress...

##entry of last Friday lost in the drafts##

We continued the alignment of the green beam towards the North/West fiber couplers. We were able to follow the path of the counter-propagated green laser and finally reach both fiber couplers.

After several attempts, we successfully injected the reference beam into one of the two fibers (the one corresponding to the North Arm) with ~30% coupling efficiency. Unfortunately, on the other fiber we could only achieve ~0.5% coupling (without further touching the alignment). This suggests that the axes of the beams coming from the two arms are not perfectly superimposed.

However, a fine adjustment will be carried out on Monday using the two mirrors equipped with picomotors (see Fig. 1).

Side note:

- We temporarily connected the picomotors of the two ALS mirrors (used to align the reference beam) to the "picoAppEIB" server. For the time being, the first mirror (bottom circle on fig. 1) is controlled using the "FAR_M1_{Tx, Ty}" DoF, the second one (top circle on fig. 1) using "M8_{Tx,Ty}".

- We added the control of the flip mirror for the reference green beam (violet circle on fig. 1). It is now connected to the TTL_inj server in the flip mirror TCS rack of the piscina, on the channel 5 (green cable in fig. 2).

- In addition, we prepared two more flip mirror cables (the yellow and blue ones), which may be used in the future on the main beam path.

For one week (from 2026-04-17-06h20m15 until 2026-04-24-07h13m06-UTC) there were 2 instances of the same process (BACnetServer) running at the same time, which explains the chaotic loss of data at the level of the slow frame builder zFbsInf.

The first attempt to start a duplicate process was correctly rejected by Cm:

2026-04-17-06h19m01-UTC>INFO...-Cm> Name already used.

2026-04-17-06h19m01-UTC>INFO...-Cm> Error in server initialization.

However, the subsequent repeated attempts were not rejected.

The (old) instance was killed manually around 2026-04-24-07h13m06-UTC, when a new BACnetServer process was properly restarted.

Independently, zFbsInf was restarted a bit later, at 2026-04-24-07h24m51-UTC, ensuring that the problem was cleared in the data transmission chain.

Everything back to normal.
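To quantify how long the two BACnetServer instances coexisted, the timestamps quoted above can be parsed with a minimal sketch like this one. The helper name `parse_log_time` is hypothetical, and the format string is assumed from the timestamps as they appear in this entry.

```python
from datetime import datetime

# Timestamp layout as it appears in this entry, e.g. 2026-04-17-06h20m15
LOG_TIME_FORMAT = "%Y-%m-%d-%Hh%Mm%S"


def parse_log_time(stamp):
    """Parse a log timestamp, ignoring an optional trailing '-UTC' (hypothetical helper)."""
    if stamp.endswith("-UTC"):
        stamp = stamp[: -len("-UTC")]
    return datetime.strptime(stamp, LOG_TIME_FORMAT)


duplicate_start = parse_log_time("2026-04-17-06h20m15")
duplicate_end = parse_log_time("2026-04-24-07h13m06-UTC")
duration = duplicate_end - duplicate_start
print(f"Duplicate instance ran for {duration}")
```

The resulting interval is the period over which data from zFbsInf should be treated with care.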

The side supports (ears) have been glued onto the two CPs.